Eight ways to see inside

A sampler of diagnostics emerging from Stanford

“Please don’t confuse your Google search with my medical degree,” reads one novelty coffee mug. But what if internet searches, in the aggregate, could lead to improved diagnoses?

Take Eric Horvitz’s work. Horvitz, MD, PhD, is a technical fellow at Microsoft Research, where he serves as the managing director of the company’s main research lab. When he and his colleagues look at search logs, they don’t see hypochondriacs. They see people who are individually investigating their symptoms, and collectively telegraphing their syndromes. For example, months before a person is diagnosed with pancreatic cancer, he might search for “back pain.” A little later, “weird weight loss.” And then “itchiness” and “dark urine.” If his search engine has been taught to notice the pattern, it might one day provide an alert to make an appointment with someone who has, yes, a medical degree.

Harnessing the power of big data is just one of the approaches researchers are using today to develop new diagnostic tools. Another trend is to democratize diagnosis by creating inexpensive, easy-to-use devices that can be deployed in the farthest reaches of the globe, or the nearest corner of your living room. And scientists are prototyping gadgets that were once the province of science fiction, including a machine that detects a dozen diseases with one drop of blood. Here are eight innovative ways to figure out what’s going on inside of us.

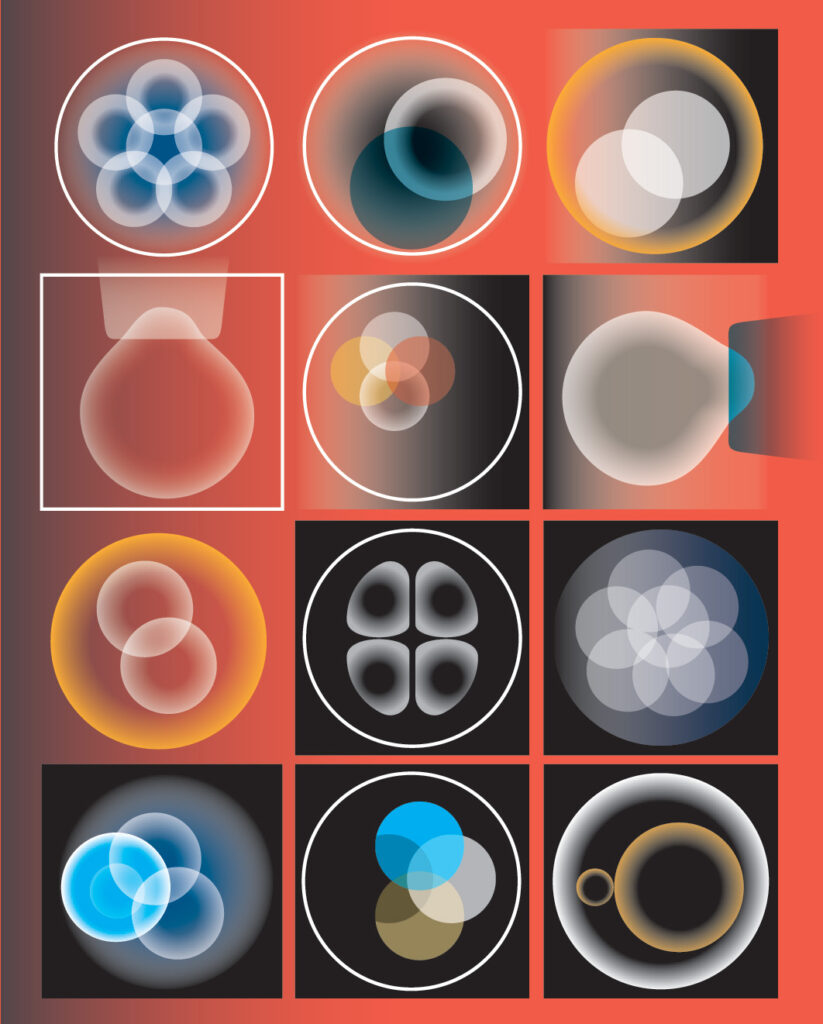

Goldilocks’ embryo

When David Camarillo, PhD, was a graduate student in mechanical engineering at Stanford in 2007, he collaborated briefly with Barry Behr, PhD, on using imaging technology to select the best in vitro-fertilized embryos to transfer into a patient. Something Behr said stuck with him: Some embryos are squishier than others.

“It’s like a Goldilocks ‘just right’ type of thing,” says Behr, a professor of obstetrics and gynecology. When injecting sperm into an egg, embryologists might think, “This is too easy — there’s no resistance,” he says. “Or this is like chewing gum — I can’t break the membrane.”

Both extremes seemed suboptimal, but there was no way to quantify them. “Scientifically, ‘too hard’ or ‘too soft’ is not adequate,” Behr says.

So when Camarillo returned to Stanford in 2012 as an assistant professor of bioengineering, he and Behr decided to scientifically assess squishiness. Or, more precisely, to determine whether an embryo’s viscosity and elasticity signified something about its viability. “Let’s just try taking a pipette and sucking on the embryo a little bit to see how much it deforms,” Camarillo proposed.

That method, called micropipette aspiration, is quick, minimally invasive and commonly used to assess cell viscoelasticity. “We compare it to a gentle squeeze — we call it the embryo hug,” says Livia Zarnescu Yanez, who earned her PhD this year in Camarillo’s lab and is the lead author of a paper published in February in Nature Communications describing the work.

The researchers found that both mouse and human embryos within a certain range of viscoelasticity — not too hard and not too soft — are more likely to form healthy blastocysts, the ball of cells that begins to form about five days after fertilization. They could predict with 90 percent accuracy which embryos would do so. And when they implanted the mouse embryos into mice, those classified as viable were 50 percent more likely to result in live births. Clinical trials in humans are underway, and the researchers plan to start a company to put their findings into the marketplace.

“I think it could change how we do IVF,” says Behr. The trend in infertility treatment is already to implant a single embryo, but this could increase the likelihood that that embryo will develop into a healthy baby. It could allow doctors to set expectations for patients whose embryos are unlikely to be viable. And it could enable embryologists to fertilize fewer eggs in the first place, thereby reducing the number of couples who must grapple with the ethical question of what to do with embryos they don’t plan to use.

“Most of what we think we’re measuring is the egg,” says Yanez. The researchers are confirming that their method of assessing squishiness works as well for eggs as it does for embryos. If it does, it will benefit egg-freezing patients as well as IVF patients.

“There is no egg viability test, and we feel that if we can establish our correlation between the viscoelastic properties and the egg’s ability to be fertilized, it’s going to have far greater value,” Behr says. “The long-term future will be identifying a good egg and a good sperm and making a good embryo.”

The searchers

Eric Horvitz was talking on the phone with his childhood friend Ron when Ron mentioned he had been feeling oddly itchy lately. Horvitz, MD, PhD, probed a little about other symptoms, then suggested his friend see a doctor. Ron was diagnosed with pancreatic cancer within a month, and died within a year.

“He was relaying nonspecific symptoms to me,” Horvitz says. “Pancreatic and lung cancers are devastating because by the time the diagnosis is made, it is often too late.” Horvitz wondered if the patients were leaving clues earlier. And he knew just where to look: their web searches.

Horvitz and his Microsoft colleagues identified anonymous users whose search queries provided strong evidence of a recent diagnosis of pancreatic cancer. They then went back several months in the search logs and found that many of those users had searched for symptoms such as back pain, abdominal pain, itching, weight loss, light-colored or floating bowel movements, slightly yellow eyes or skin, and dark yellow urine.

“Separately these might not worry someone enough to see a doctor,” says Horvitz, “but is there a temporal fingerprint that would be informative to a machine-learning algorithm with thousands of terabytes of data?” It turns out there is: By examining the patterns of symptoms in a recent feasibility study, the researchers were able to predict up to 15 percent of those whose searches would subsequently indicate that they’d been diagnosed with pancreatic cancer, while maintaining a low false-positive rate. They are now working on a method to verify and extend their findings by asking recently diagnosed patients for permission to correlate their search logs with their electronic health records.

Horvitz has collaborated with Stanford researchers Russ Altman, MD, PhD, professor of bioengineering, of genetics and of medicine (the two are longtime friends from their days as Stanford graduate students), and Nigam Shah, MD, PhD, associate professor of medicine and of biomedical data science, to analyze search logs for adverse effects of medications. For example, in 2011, Altman’s lab demonstrated that two commonly prescribed drugs taken in combination, the antidepressant paroxetine (Paxil) and the statin pravastatin (Pravachol), can cause hyperglycemia. The researchers then went back into the search logs for 2010 and found that users were telegraphing this finding: People who conducted searches for both drugs over the course of the year were more likely to search for “diabetes words” — 50 plain-spoken phrases like “fatigue” or “peeing a lot” — than people who searched for only one of the drugs.
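The comparison described above can be sketched in a few lines of Python. This is a toy illustration, not the researchers' actual pipeline. The user logs, the helper names and the two-phrase symptom list are all made up for the example; the real analysis used 50 phrases and a full year of search data.

```python
# Toy illustration only: hypothetical users and queries, not real log data.
DRUG_A = "paroxetine"
DRUG_B = "pravastatin"
# Stand-ins for the study's 50 "diabetes words" (only two are named in the article).
SYMPTOM_TERMS = {"fatigue", "peeing a lot"}


def searched_symptom(queries):
    """True if any query in the user's log mentions a symptom phrase."""
    return any(term in q.lower() for q in queries for term in SYMPTOM_TERMS)


def group_of(queries):
    """Classify a user as 'both', 'one' or 'neither' by which drugs they searched for."""
    text = " ".join(queries).lower()
    hits = (DRUG_A in text) + (DRUG_B in text)
    return {0: "neither", 1: "one", 2: "both"}[hits]


def symptom_rates(logs):
    """Fraction of users in each group who also searched for a symptom phrase."""
    totals, with_symptom = {}, {}
    for queries in logs.values():
        g = group_of(queries)
        totals[g] = totals.get(g, 0) + 1
        with_symptom[g] = with_symptom.get(g, 0) + int(searched_symptom(queries))
    return {g: with_symptom[g] / totals[g] for g in totals}


# Hypothetical anonymized logs: user id -> list of queries over a year.
example_logs = {
    "u1": ["paroxetine side effects", "pravastatin dose", "peeing a lot at night"],
    "u2": ["pravastatin and paroxetine together", "constant fatigue"],
    "u3": ["paroxetine withdrawal", "best hiking boots"],
    "u4": ["pravastatin grapefruit", "fatigue after lunch"],
    "u5": ["pravastatin cost", "movie times"],
    "u6": ["paroxetine and pravastatin", "weather"],
}

rates = symptom_rates(example_logs)
print(rates)
```

A higher rate in the "both" group than in the "one" group is the kind of signal the researchers looked for. A real analysis would also have to address the biases Altman describes in the criticisms of search-log data.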

Horvitz and Altman emphasize that the goal isn’t for your search engine to diagnose you or alert you to algorithmically detected drug interactions. But it might nudge you to go to the doctor, or serve as a complement to the Food and Drug Administration’s adverse event reporting system.

The use of search-log data for medical research garners two main criticisms, Altman says: the denominator problem (“You don’t know how many people are taking the drug and doing fine, or taking the drug at all”) and the numerator problem (“We know not everyone reports their side effects”). Nevertheless, he says, there are insights to be gained. People are candid online — Horvitz calls it “whispering to your search engine” — and the data is voluminous and essentially free. Plus, Altman adds, there are ways to control for biases in the data. “An intelligent person analyzing it could make inferences,” he says. “It’s also possible you could be dead wrong.”

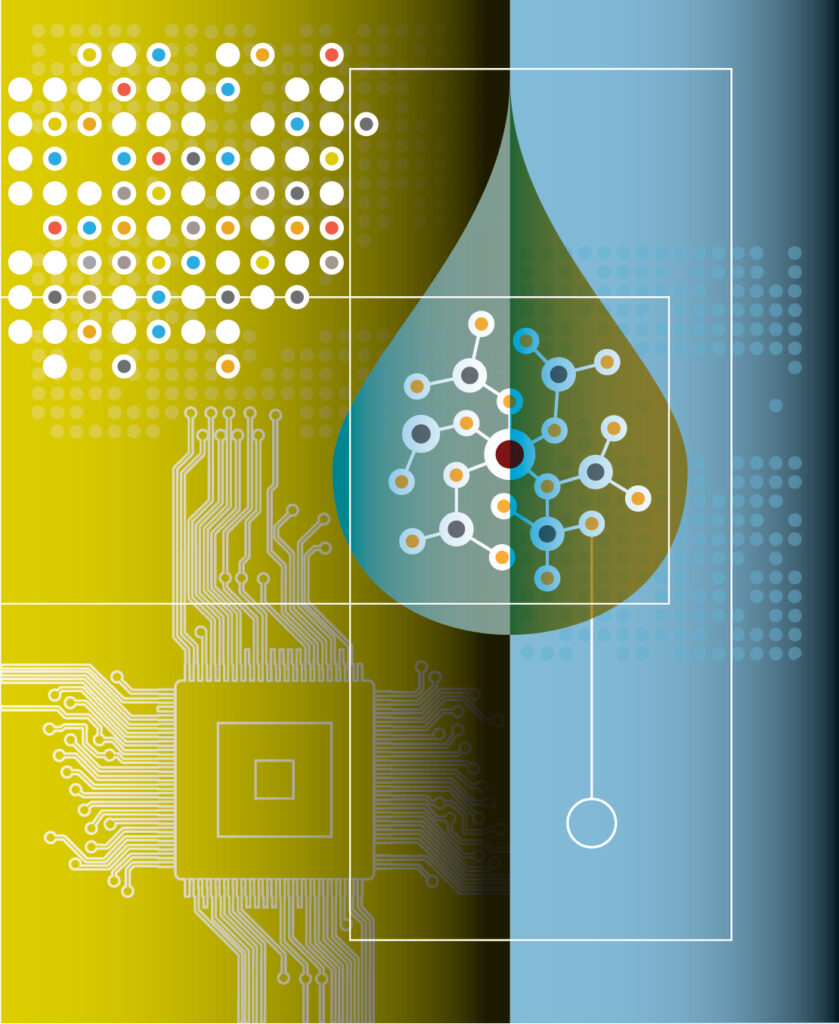

Magnetic attraction

When Shan Wang, PhD, joined Stanford’s Department of Materials Science and Engineering in 1993, the magnetics expert didn’t expect to develop diagnostic devices. “I wanted to do data storage,” he says. “I still have a little bit of research going on spintronics.” But the pull of detecting human disease has proven stronger. “There are so many unmet needs in medicine,” he says. “There are too many to work on. We have to pick and choose carefully.”

The one he chose was to detect and quantify cancer biomarkers — the proteins, nucleic acids and cells associated with cancer progression. “Cancer is the area that is lagging behind heart-disease diagnosis,” Wang says. “We feel it’s the high-impact area in which we can make a difference.”

Wang and his colleagues developed the magneto-nanosensor, a device that detects cancer proteins with sensitivity hundreds of times greater than the current commercial method, the enzyme-linked immunosorbent assay, or ELISA.

The miniature magneto-nanosensor chip, less than half the size of a dime, has either 64 or 80 “capture antibodies” on it. They can all be antibodies that bind to the same biomarker, or they can be intentionally varied so that the array of sensors measures more than one biomarker at the same time. A sample is added — a drop of whole blood, plasma, serum, urine or saliva — followed by a second batch of antibodies tagged with magnetic nanoparticles. These antibodies also bind to the biomarkers in the sample, creating a sandwich structure. Finally, the device measures the stray magnetic field produced by the nanotags and determines how much of each biomarker is present.

“We want to attack all cancer; that’s our mission,” Wang says. He has co-founded a company, MagArray, to bring the technology to market. Once it is certified as a clinical laboratory, the company will begin by offering testing services for prostate and lung cancer.

The magneto-nanosensor can also be used to detect diseases beyond cancer, and may someday be deployed in medical clinics and even in patients’ homes. “I’m very drawn to point-of-care testing,” Wang says. “With essentially one finger prick, you can detect multiple diseases, multiple biomarkers. We are actually talking about what Theranos wanted to do.”

Wang’s lab has joined forces with the digital-health company CloudDX to enter the Qualcomm Tricorder XPrize competition. The competition promises $10 million to the entrant who creates a device that best measures 13 health conditions and five vital signs, much like the tricorder Dr. McCoy used to diagnose patients on Star Trek. CloudDX’s prototype incorporates the core technology of the magneto-nanosensor; Wang’s lab contributed the cartridge where the sample is inserted. It is one of seven finalists for the prize, which will be awarded in early 2017.

“I feel like I’m in the best field right now,” Wang says. “It’s tough to get funding and to get things to work well — there are lots of challenges — but in terms of promise, it’s exhilarating.”

Iron works

Pediatric cancer patients make an awful lot of visits to radiology, undergoing scans to track the progress of their treatment and to look for recurrences and metastases. “The current standard of care for a lot of our cancer patients is PET/CT,” or positron emission tomography/computed tomography, “and that is associated with a lot of radiation exposure,” says Heike Daldrup-Link, MD, associate professor of radiology. “One CT scan wouldn’t be a problem, but our oncology patients get a lot of these scans before, during and after treatment, and then potentially for the rest of their life.”

Some research suggests the accumulated radiation exposure from multiple CT scans could lead to secondary cancers later in life. “Secondary cancers usually develop after decades,” Daldrup-Link says. “So a 70-year-old may not encounter their secondary cancer, but a young patient would.”

Daldrup-Link has developed a technique to scan the whole body of pediatric cancer patients with magnetic resonance imaging, which is radiation-free. To do this, she injects a novel contrast agent — iron nanoparticles, known as ferumoxytol — into the patient’s bloodstream. Ferumoxytol is typically used for the treatment of anemia.

“We can beautifully see all the blood vessels everywhere,” says Daldrup-Link, looking at a scan from an MRI with ferumoxytol. “Our surgeons can nicely relate tumor deposits to the vessels. I think we really get a soft-tissue contrast that is not otherwise available.”

Daldrup-Link has shown that combining this MRI technique with PET reduces radiation exposure by 77 percent compared with PET/CT. She and a nationwide network of colleagues are now evaluating different tumor types to see which patients should be scanned with PET/CT, PET/MRI or MRI only. In addition to minimizing radiation exposure, Daldrup-Link strives to ensure patients have to climb into only one machine each time they are scanned. “For our young patients, it’s huge to have just one scan instead of two or three,” she says. “We believe PET/MRI can provide that.”

Ferumoxytol also has advantages over gadolinium chelates, the traditional MRI contrast agents. Ferumoxytol remains visible in the blood vessels for at least 24 hours, whereas gadolinium peaks quickly, making it challenging to scan both the primary tumor and the entire body of sometimes wiggly patients.

Also, recent studies show gadolinium may deposit in the brain. Hearing that their child will receive an iron supplement instead, Daldrup-Link says, comes as welcome news to worried parents. “Gadolinium is a heavy metal, so it’s not very natural to our body, whereas an iron product is basically a concentrated steak, or a ton of strawberries.” There is a risk that some patients may be allergic to iron compounds such as ferumoxytol, so Daldrup-Link follows an FDA protocol to monitor patients closely for signs of allergic reactions and treat them if they occur.

Iron nanoparticles may have other applications, as well. In tumors, they are taken up by immune cells called macrophages, which may enable radiologists to track the success of cancer immunotherapy treatment. In mice, “we do see the retention of our iron nanoparticles is reduced in those that have been treated with therapies that deplete cancer-promoting immune cells,” Daldrup-Link says. The nanoparticles may even help fight cancer: In a surprise finding in another mouse study, they activated cancer-fighting macrophages to destroy tumor cells.

Follow the crowd

Joseph Liao is looking at a video on his computer. “It’s a papillary tumor — it looks like a broccoli — but then you kind of slice it like a loaf of bread,” says Liao, MD, associate professor of urology at Stanford, gesturing as the video proceeds to display cross-sections of the tumor.

Liao was the first urologist to use confocal laser endomicroscopy, in which a fiber-optic probe is inserted into a standard endoscope, to create these “optical biopsies.” Surgeons can use optical biopsies to assess tumors in real time, without waiting for pathologists. “Even if we get it back in an hour, that’s actually too late,” Liao says. “The case is done by then.”

Techniques like this are particularly promising for bladder cancer, which is the most expensive cancer to treat on a per-patient basis because of the lifelong surveillance required. “One of the challenges of bladder cancer is that it has a very high recurrence rate, and that may stem in part from the cancer biology itself, but also it’s the way that we cut these things out,” Liao says. “The better we can see, the better we can cut them out. The better we can cut them out, the lower the likelihood it’s going to recur.”

Using CLE for bladder cancer is in its infancy, and Liao has turned to some unconventional sources to help him determine how best to train others in the technique. First, he and his colleagues determined which features of the optical images indicated high-grade disease, low-grade disease or a benign condition and developed a training module. Then, they had novice observers — from urologists to pathologists to researchers with doctorates in nonmedical fields — undergo the training and assess 32 images.

Overall, the observers demonstrated moderate agreement in cancer diagnosis and grading, comparable to pathologists in other studies. Plus, there was a twist: “The engineers did the best,” Liao says. “They know nothing about clinical medicine, but if you want them to do pattern recognition, they’re very good at it.”

Liao and his colleagues then refined their training module and presented it to a group of crowdsourced workers: 602 people willing to watch videos of optical biopsies for 50 cents each through Amazon’s Mechanical Turk platform. “They were able to correctly diagnose cancer in 11 out of 12 cases,” says Liao. “What was cool was that they generated this much information in less than 10 hours.” In the previous study of novice observers, gathering the data had taken months.

The use of crowdsourcing to identify cancer has piqued much curiosity. “Is the idea piping images in real time while you’re in the operating room and asking the crowd to help you decide?” Liao asks. “No. That’s not the point. We’re not trying to ask a medically naive crowd to help us diagnose cancer.” The point is to learn how people come to discern the valuable information in the images — what they master easily and what they don’t — and improve training accordingly. And the methodology is by no means limited to the CLE technique, Liao says. “The bigger question is how we can use crowdsourcing more effectively as an educational tool, as a mechanism for review, training and recertification.”

Eye spy

When David Myung, MD, PhD, was a first-year ophthalmology resident at Stanford in 2012, he frequently found himself in the emergency room in the middle of the night, wishing he could just send a picture of the eye in front of him to his supervisors. “I would see a traumatic eye injury and want to be able to share an image of it with my senior resident or my attending, but instead could only describe it over the phone in words,” says Myung, now a member of the ophthalmology faculty at the Veterans Affairs Palo Alto Health Care System and co-director of the Ophthalmic Innovation Program at the Byers Eye Institute at Stanford. He tried using the camera on his iPhone, but it didn’t have the right optics or lighting. “There was no way to take a good photo of the front or the back of the eye.”

Meanwhile, assistant professor of ophthalmology Robert Chang, MD, had been experimenting with ways to document findings in the clinic by attaching an iPhone to a slit lamp, which uses a narrow beam of light and high-quality magnifying lenses to examine the inside of the eye. With the advent of the iPhone 4S, Chang and Myung were coming to the same conclusion: The camera now took photos of sufficient resolution to make some medical decisions. The two teamed up to develop an inexpensive, pocket-size smartphone adapter that bypassed the slit lamp, harnessing the power of the iPhone camera and standard retinal-exam lenses that practitioners already own.

They began by scrounging for parts. “I started tinkering in my living room while my kids were running around,” says Myung, a former medical device engineer. “The first functioning prototype had a piece of Lego, parts from Amazon, some electrical tape and a small LED flashlight.”

Chang pitched the project at the 2013 StartX Med Innovation Challenge weekend hackathon, where he recruited mechanical engineering graduate student Alexandre Jais to the project. Jais not only provided expertise; he also had a 3D printer in his dorm room, which enabled the team to rapidly refine prototypes. With seed funding from two School of Medicine programs, they developed an adjustable adapter with its own custom light source, so it could be attached to the ever-evolving sizes and shapes of smartphones “like a selfie stick,” Myung says.

The device is now sold by DigiSight Technologies as the Paxos Scope, under a license from Stanford. (Myung is a design consultant to DigiSight.) To ensure patient privacy, an app transmits encrypted images through a cloud-based platform.

The researchers envision the device being used outside of eye clinics to determine whether referrals to ophthalmologists are warranted. Chang recently led a feasibility study in Hyderabad, India, which demonstrated that technicians could easily learn to capture high-quality eye images with the device. A study led by professor of ophthalmology Mark Blumenkranz, MD, showed that the device captures photos comparable to in-office ophthalmic exams for diabetic eye screening.

The researchers are particularly excited about the device’s potential value in remote areas of the world. The ASCRS Foundation has donated 12 Paxos units through the Himalayan Cataract Project to the Tilganga Eye Centre in Kathmandu, Nepal. “In the past, ophthalmic technicians at outlying clinics hundreds of miles from Tilganga have had to decide when to refer patients,” says Myung. “It’s a full day’s ride through mountainous terrain, and the trip involves paying for a bus, taking time out of work and leaving family just to be seen briefly in the tertiary eye clinic.” Now, the technicians can transmit Paxos Scope photos to Tilganga, where physicians can advise them on which patients need to be seen right away — and which can wait.

Better pill to swallow

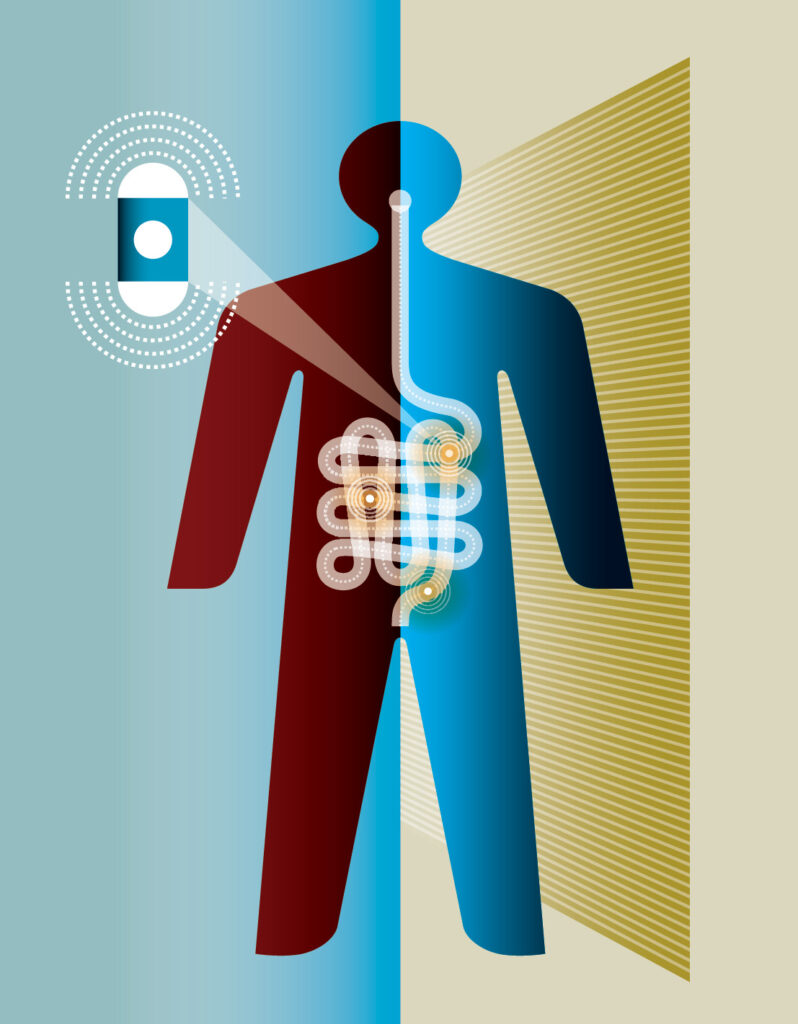

Let’s say you want to know what’s going on inside the small intestine — maybe assess it for cancer or bleeding. A gastrointestinal endoscopy won’t get down far enough, and a colonoscopy won’t get up high enough. You could use video capsule endoscopy, in which the patient swallows a pill cam, but it has only a 170-degree field of view, and takes photos just of the tissue on the surface. You could miss a tumor that way.

Stanford researchers are designing an ultrasound pill cam that would solve these problems. It would provide a 360-degree view of patients’ innards, to a depth of 5 centimeters, enabling physicians to detect and stage cancers of the small intestine. If it could be convinced to dwell in the stomach for an hour, it also might be able to detect pancreatic cancer and pancreatitis.

“Optical pill cams don’t know where they are,” says Butrus Khuri-Yakub, PhD, professor of electrical engineering, who is leading the effort in collaboration with several Stanford colleagues, including professors of radiology Brooke Jeffrey, MD, and Eric Olcott, MD, and assistant professor of electrical engineering Amin Arbabian, PhD. “This sees the organs outside. It can presumably know where it is. Eventually, one can imagine modalities to put propulsion in it to control the location, transit and speed of transit.”

The device uses a capacitive micromachined ultrasonic transducer, a miniaturized type of ultrasound developed in Khuri-Yakub’s lab, adapted to wrap around a capsule that contains an integrated circuit, a transmitter, an antenna and two small batteries. It will capture data from its eight-hour journey through the gut at a rate of four frames per second, transmitting it to an external receiver worn on the waist. (The other option was to extract the pill after it was excreted, “but we don’t want to get involved in this,” says Gerard Touma, one of three graduate students in Khuri-Yakub’s lab working on the project, along with Farah Memon and Junyi Wang. “We just want to stick to wirelessly transmitting it outside the body.”)
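For a sense of scale, the two figures quoted above imply a six-digit frame count per exam. The short calculation below uses only those two numbers; it makes no assumption about frame size or data rate, which the article does not give.

```python
# Back-of-the-envelope arithmetic using only the figures quoted in the article.
TRANSIT_HOURS = 8        # "eight-hour journey through the gut"
FRAMES_PER_SECOND = 4    # "four frames per second"

total_seconds = TRANSIT_HOURS * 60 * 60
total_frames = total_seconds * FRAMES_PER_SECOND

print(f"{total_seconds:,} seconds of transit")   # 28,800 seconds of transit
print(f"{total_frames:,} frames to transmit")    # 115,200 frames to transmit
```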

There are many steps before the device is ready for market — a process Khuri-Yakub estimates could take five years. After the prototype is complete, it will undergo simulations in the lab, then proceed to bench-top testing. “We’ll go to the butcher shop and buy an intestine and put a pill in it,” he says. Assuming all goes well, the researchers would proceed to animal and human testing and pursue FDA approval. Khuri-Yakub is optimistic about the latter, pointing out that this device uses components similar to approved pill cams and that ultrasound is already being used endoscopically.

“There are a lot of challenges,” Khuri-Yakub says. “It’s a major accomplishment to build a whole ultrasound system on a chip 6 millimeters by 6 millimeters. But they are not unsurmountable challenges. Hopefully we will knock them over one by one.”

Pathologists’ prognosticator

Lung cancer is one of the most prevalent and deadly cancers worldwide, but pathologists looking at slides of tumor tissue under a microscope can’t effectively predict how long individual patients will live. Nor is it easy for pathologists to distinguish between the two most common types of lung cancer, which has implications for patients’ treatment.

Enter the computer.

Using a machine-learning algorithm, Stanford researchers led by Michael Snyder, PhD, professor and chair of genetics, have developed a software program that can distinguish between adenocarcinoma and squamous cell carcinoma, and predict how long patients will live, with up to 85 percent accuracy.

The researchers fed data from more than 2,000 patients into the program: their slide images, the grade and stage of their tumors as determined by pathologists, and how long they lived after diagnosis. They trained the program to examine almost 10,000 characteristics of lung-tumor tissue — far more than a human eye can detect. The machine-learning algorithm then identified 240 of those characteristics that best differentiated adenocarcinoma from squamous cell carcinoma, 60 characteristics that predicted how long an adenocarcinoma patient would survive after diagnosis and 15 that predicted how long a squamous cell carcinoma patient would survive. The researchers validated their findings on data from a separate group of patients.
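The idea of narrowing thousands of measured characteristics down to the few that best separate two tumor types can be illustrated with a simple ranking. The article does not say which selection method the researchers used, so the scoring rule below is a generic stand-in. The data are random numbers generated for the example, and every name and constant is hypothetical.

```python
import random
import statistics

random.seed(0)  # make the toy data reproducible

N_PER_CLASS = 50
N_FEATURES = 20
INFORMATIVE = {3, 7, 11}  # hypothetical: features that truly differ between classes


def make_sample(label):
    """One synthetic 'slide': a list of feature values. Not real image data."""
    return [
        random.gauss(2.0 if (label == 1 and j in INFORMATIVE) else 0.0, 1.0)
        for j in range(N_FEATURES)
    ]


class0 = [make_sample(0) for _ in range(N_PER_CLASS)]  # stand-in for adenocarcinoma
class1 = [make_sample(1) for _ in range(N_PER_CLASS)]  # stand-in for squamous cell


def separation(j):
    """Absolute difference in class means, scaled by the average spread."""
    a = [row[j] for row in class0]
    b = [row[j] for row in class1]
    spread = (statistics.stdev(a) + statistics.stdev(b)) / 2
    return abs(statistics.mean(a) - statistics.mean(b)) / spread


# Rank all features by how well they separate the two classes; keep the best three.
ranked = sorted(range(N_FEATURES), key=separation, reverse=True)
top = ranked[:3]
print("Top features:", sorted(top))
```

In this toy setup the three planted features should rise to the top of the ranking. The real program worked from almost 10,000 characteristics and, as the article notes, was validated on a separate group of patients.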

“It’s nice to have automated processing do this rather than have the subjectivity that pervades medicine,” Snyder says. Two pathologists assessing the same lung-cancer slide agree about 60 percent of the time. But even if they agreed more frequently, their analysis wouldn’t reveal how long a patient might live. For example, more than half of stage-1 adenocarcinoma patients die within five years of diagnosis, but 15 percent of them live more than 10 years. Having a better sense of patients’ prognosis, which the software provides, “will affect how aggressively you treat cancers,” Snyder says.

The machine-learning approach should work well for any organ tumor. “Moving into other cancers is a no-brainer,” Snyder says. The researchers will tackle ovarian cancer next, “because it is pretty deadly.”

Snyder sees the software as an important aid, but not a replacement, for human pathologists. “My own view is that it should be used every time, right off the bat,” he says. “Pathologists will still review images, but it reduces the chances of a mistake. It’s easier to confirm what the machine has done than to do it de novo.” Plus, he says, it’s cost-effective: “In the long run, it should save a lot of money. Machines are faster than people, and pathologists cost a lot more.”

Snyder would like to see automated evaluation of tumor slides deployed in the clinic within two years — most likely via companies that sell microscopes, which are interested in building the software into their platform. Combining this technique with other advances in understanding tumors at the molecular level, such as biochemical, genomic, transcriptomic and proteomic assays, will provide a “more comprehensive view of cancer,” he says. “I think that will be the future.”