Can we get along?

Humans versus artificial intelligence

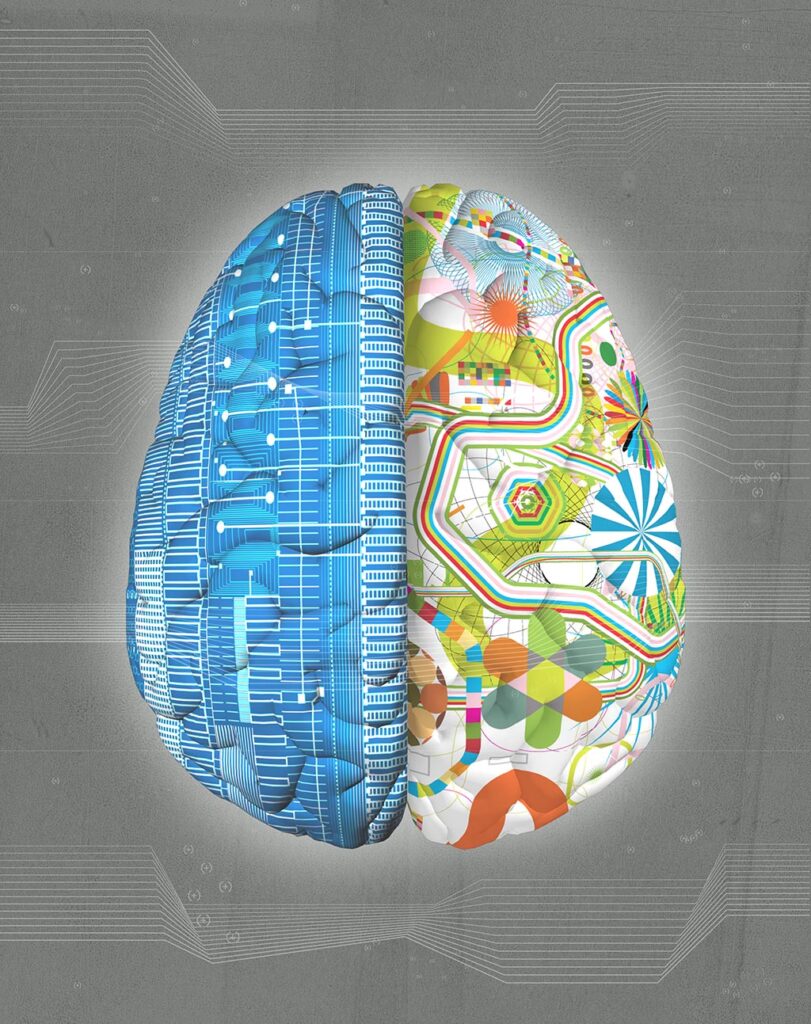

The human brain, it’s been remarked, is the most astonishingly complicated thing in the universe. But then again, it’s the human brain that’s saying this.

Now, that pat-yourself-on-the-back, flesh-and-blood braggart of a human brain has gone and invented a disembodied Mini-Me made out of silicon and known as artificial intelligence or AI. It — notwithstanding an internal dialogue that boils down to a bunch of ones and zeros — might be getting smarter than us.

What did we get ourselves into?

Many among us are wondering: Will AI surpass and maybe even supplant us? Will it wind up becoming sentient, like us? Might it ultimately resemble an advanced version of us?

Curious as to what experts on the human brain are thinking about all of this, Stanford Medicine queried these four neuroscientists who have plumbed the depths of the brain and explored its workings and its secrets.

Ivan Soltesz, PhD, the James R. Doty Professor of Neurosurgery and Neurosciences. Soltesz’s explorations of brain physiology and cognitive simulations have extended to his assembly of a high-resolution computer model of the human hippocampus, a key brain structure critical to memory and spatial navigation.

Lisa Giocomo, PhD, professor of neurobiology. Giocomo has done pioneering research on grid cells, a particular class of neurons located in a part of the brain called the entorhinal cortex. Grid cells create the navigational maps we use to guide us through three-dimensional space. They are probably involved in other complex calculations as well.

Josef Parvizi, MD, PhD, professor of neurology and neurological sciences. Parvizi has used electrical stimulation of specific structures and circuits in the human brain to explore and map the effects of such focused stimulation on consciousness — such as alterations in facial perception, recognition of numerals and one’s sense of one’s bodily self.

Bill Newsome, PhD, professor of neurobiology, the Harman Family Provostial Professor, and founding and former director of the Stanford Wu Tsai Neurosciences Institute. Newsome’s research has focused on the neuronal processes involved in visual perception and visually guided behavior.

The scientists’ answers to our questions ran the gamut from wariness to hope. Here’s what they had to say:

What, if anything, can the human brain do that might be difficult or impossible for AI?

Ivan Soltesz: I am of the view that AI is eventually going to be able to do everything that humans can, and that this will happen faster than we think. There is no reason AI could not learn, for instance, dark humor, or one-shot learning (as opposed to learning based on lots of repetitions of individual “cat versus dog” examples). And, conscious or not, it could probably also learn to “deliberately” act “silly” to hide the machine behind the mask and appear childlike. And so on.

The one massive limitation for current AI systems is the need for powerful computers and thus massive energy usage. But please note: I have a grant from the National Science Foundation whose central motivation is to develop new computers that can run on sugar, just like our brains do. The future could also hold other solutions for energy sources. So, even this energy limitation of AI systems will likely be gone very soon.

And while cats currently rule both the web and real-life humans, AI will be able to learn how to pet a cat better than humans can do it, so there goes that advantage, too.

Lisa Giocomo: One incredible feature of the human brain is our ability to encounter a completely new scenario and use prior knowledge to rapidly and continuously adapt to the new scenario — even if it contains sensory, emotional or social stimuli we haven’t encountered before.

Human brains are also exquisitely capable of basing our decisions on potential long-term consequences, even when these may occur over very long time scales (years, decades, lifetimes). This allows us to, for example, not just react to stimuli but actively suppress our reaction based on our knowledge of consequences.

We are only at the very start of this journey with AI — it will get better, faster, more accurate, less prone to confabulation over time.

We humans are conscious. Does that give us a survival advantage vis-à-vis artificial intelligence?

Josef Parvizi: Oh, hell yes! Without consciousness we would have no feelings of hunger, thirst, sexual desire, fatigue and fear. You tell me if these feelings have no survival advantage? Be my guest, and say no. The whole world will bet against you.

What is consciousness, anyhow?

Bill Newsome: It’s hard to say what consciousness is when it’s so unapproachable from neuroscientists’ standard reductionist approach. As philosopher Thomas Nagel has argued: Our contemporary science is inherently third-person, but consciousness is inherently first-person. Anything that takes us away from the first person takes us further away from (not closer to) the primary phenomenon we’re trying to understand. So I (along with others) think there is a serious question whether our third-person science can fully understand consciousness.

Can AI become conscious? What would that take?

Giocomo: It’s possible that our metrics for identifying consciousness may be, at some point, inadequate to truly differentiate between the human brain and AI.

Newsome: Whether machines will become conscious is an interesting question, but given how little we know about the physical basis of human consciousness, how can we say anything at all worthwhile about machines? Our digital computers are based on architectures and modes of computing that are totally different from those of biological brains.

Parvizi: For AI to be potentially conscious as we know it, in other words, for AI to be potentially conscious like a human being, the AI algorithm has to be organized like the human brain, it has to be born and raised in a social environment featuring continuous interaction with others, and it has to reside in a biological organism identical to a human’s frail body, with its bladder getting full and urging it to run to the bathroom, or its flesh hurting because it just touched a super-hot surface.

If you re-create a humanlike organism and put a human-brain-like organ in it, there you go: You have a conscious AI. But it is no longer called AI; it is called a cloned human!

Does consciousness require connections to a body?

Newsome: I believe consciousness is a biological phenomenon. One thing I feel pretty sure of is that no AI will become conscious in the human or even nonhuman animal sense until it is loaded into mobile bodies and has to work out the really big problems of survival (energy extraction and conservation, competition); reproduction; and the risks, benefits and norms that come along with advanced social cooperation. I think reward, aversion, motivation, etc., are required for the kind of human and to some extent animal consciousnesses that we are familiar with.

Appetites and desires serve fundamental organismal goals and purposes, and I have no idea how a machine can be endowed with these kinds of subjective feelings. Appetite and desire can be simulated, I suppose, as cost functions (or something like that), but does the simulation approximate the real thing, or are the two totally different? Can a machine have goals and purposes other than what is programmed into it by a human?

I don’t see how this can happen with the current style of AI, but if AI is indeed loaded onto mobile bodies and faced with solving the problems of survival, perhaps the AI could, over time, modify its human-given motivations and goals and develop its own. Who knows?

Despite my skepticism, I do share the uneasy sense that with AI we are at the threshold of a new, very uncertain age in human and scientific history. While AI may not acquire a human form of consciousness, it may become conscious in ways that are incomprehensible to us. There may be worlds of consciousnesses out there just waiting to be realized in complex, learning beings. Again, who knows?

Can we coexist? Can we all just get along?

Soltesz: My only hope for what I admit is my otherwise dark vision of our future is the fact that we as humans have had nukes for decades now but so far have resisted blowing up the world, so maybe humans will agree to limit AI applications in a practical and effective way.

One other crazy thought: It appears curious to me that we arrive at the dawn of the AI age around the same time as we enter the age of deep-space human travel (plus or minus a few years or maybe a decade). So perhaps humans and AI systems can “agree” right from the start to divide the galaxy into distinct domains of neighborly coexistence.