Medicine’s AI boom

The Stanford impact

Outside the Stanford Health Care office of data scientist Nigam Shah hangs an antiquated memento from the original wave of artificial intelligence hype of the mid-1960s. Yes, the ’60s.

Against a backdrop of free love and Vietnam War acrimony, the first AI wave washed over Stanford University and numerous other academic institutions with hardly a ripple felt by a distracted outside world.

Its arrival in the early days of Silicon Valley coincided with that of two pioneering computer scientists who came to help Stanford launch the country’s first “super computer” for AI in medicine. Housed at the Lane Library, it was called — as the stained-glass sign in shades of oceanic blue now hanging in Shah’s office reads — SUMEX-AIM, short for Stanford University Medical Experimental Computer for Artificial Intelligence in Medicine.

The term “artificial intelligence” had been coined a decade earlier by mathematics and computer science professor John McCarthy, PhD, who came west from MIT to launch the influential Stanford Artificial Intelligence Laboratory in 1963.

In other words, even with all the buzz surrounding the current AI explosion, it’s really nothing new — especially at Stanford.

“If you poll the 1,200 faculty in the School of Medicine, I’d be surprised if more than 10% know about any of Stanford’s AI history,” said Shah, MBBS, PhD, professor of medicine and of biomedical data science and chief data scientist for Stanford Health Care. “A lot of people think this right now is the first AI hype cycle.”

It helps explain why Shah, who has been terabytes-deep in machine learning and neural networks for decades, takes his duties as an AI historian and pragmatist seriously. He wants newbies just tuning in to understand the context when deciphering what has deep relevance and what is just another round of futuristic noise.

Shah describes this as a moment of both high frenzy and immense opportunity, with a venture-capital-fueled rush to deliver applications with lasting value — a goal he estimates only 5% to 10% of the applications are hitting in today’s influencer-inspired culture that seeks “breakthroughs every 24 hours.” Through that haze of ambition, real-world innovations in medicine are emerging. They are just more subtle than sexy; more incremental than game-changing.

As Stanford Health Care’s AI vetter-in-chief, Shah is watching closely. If algorithms are designed right and serve a useful purpose, even hype busters like him are willing to buy into this wave of anticipation that has washed over every sector of society. Expectations are especially high at a place like Stanford Medicine, where the interests of industry and academia synthesize in a way that they do at few other medical institutions.

“If we utilize this moment of attention, it’s quite an opportunity,” Shah said, adding that it will take the best ideas being tested at the country’s 600 health care systems — no small task. “If we do that,” he added, “we will have pulled off a national experiment at a scale that no government agency or single company could have done. That is immensely exciting.”

It’s a historic opportunity, and it raises questions about how to use AI in medicine responsibly, how to set realistic expectations for its potential and what part the humans behind the algorithms will play. Here are a few of the most pressing questions.

What is Stanford Medicine doing to ensure AI is used responsibly in research and health care?

Fears about AI are real, particularly in the sensitive world of health care. Could these new algorithms compound existing challenges such as bias in how people are treated based on their race and loss of privacy due to health data breaches? Could they ratchet up distrust in the health care system and those who provide care?

In June, a collaboration between the Stanford Institute for Human-Centered Artificial Intelligence, or HAI, and the School of Medicine resulted in the RAISE-Health initiative, whose mission is to “guide the responsible use of AI across biomedical research, education, and patient care.”

Co-sponsored by Lloyd Minor, MD, dean of the School of Medicine and vice president for medical affairs at Stanford University, and Fei-Fei Li, PhD, co-director of HAI and professor of computer science, the initiative brings together a diverse set of voices from across the Stanford community — ethicists, engineers and social scientists.

Feeding that knowledge pool are researchers like Tina Hernandez-Boussard, PhD, associate dean of research and professor of medicine, of biomedical data science and of surgery, whose work aims to ensure that diverse populations receive equitable resources and care. And that of Sanmi Koyejo, PhD, a RAISE-Health co-leader, whose work centers on fairness and the detection of potential bias in AI-aided analysis of medical imaging.

“AI is poised to revolutionize biomedicine, but unlocking its potential is intrinsically tied with its responsible use,” Minor said. “We have to act with urgency to ensure that this technology advances in everyone’s best interests.”

That will involve sound government regulation, something Sherri Rose, PhD, a professor of health policy, is shaping. Rose’s research has focused on making sure the interests of marginalized populations are considered in the rush of AI research.

And what about how we’re training a new generation — perhaps the first real AI generation — on how to deal with all of the new issues that AI in medicine is raising? There’s a fellowship for that. Through a program led by the Stanford Center for Biomedical Ethics, with funding from pharmaceutical company GSK, three postdoctoral fellows are exploring the ethical, legal and social considerations arising from the use of artificial intelligence in the pharma industry, from early-stage drug discovery efforts to its use in doctors’ offices.

How are we defining AI?

Between deep neural networks, machine learning, large language models and generative versus nongenerative AI — just for starters — the jargon of artificial intelligence can get fuzzy fast. Even the term “artificial intelligence” has multiple meanings.

“It depends who you’re talking to,” Shah said. “It can be in the mind of the beholder.”

Its original meaning is the imitation by computers of human intelligence. That’s a tall order — and might never come to pass. More often people use the term to refer to computers that can accomplish complex tasks, but there’s no clear divide between a plain old algorithm doing run-of-the-mill computing and an AI program. Is a pocket calculator AI? Most would say no. But the face recognition feature on a smartphone would make the grade.

Shah’s “AI 101” list of definitions looks like this:

- Machine learning is the basis for building algorithms or models by feeding a computer certain datasets. It is where all AI problem-solving begins.

- A neural network is the most complex form of machine learning, using all possible mathematical inputs with no constraints — and with multiple layers, it can become a deep neural network, producing what is known as deep learning.

- If you can take that algorithm or model produced by machine learning and generate data with it, it’s called generative AI. Ironically, ChatGPT, the buzz-producing model du jour, “makes stuff up,” Shah said. “That’s what it’s designed to do.”

- Large language models, such as the internet-fed GPT-4 and Bard, are the rocket fuel bringing generative AI to life.

Shah is clearest on one point: Even if generative AI is responsible for all the attention, it will not factor prominently into medicine and health care any time soon because of the concerns around accuracy and trust.

Nor, he said, will 90% of the algorithms being produced today.

“Ten years from now, we’ll be immensely grateful for the 10% that panned out and changed the science, the practice, or the delivery of care in medicine,” he said.

In what ways is Stanford Medicine taking the AI lead?

The formation of the Stanford Center for Artificial Intelligence in Medicine and Imaging (known as AIMI) in 2018, which brought together 50 faculty from 20 departments, spurred the wide range of interdisciplinary team science being done at Stanford Medicine in machine learning today.

Shah was among the first to embrace such collaboration. As Stanford Health Care’s chief data scientist, he is on the front lines of bringing AI from the research lab to the clinic. His team is looking for the immediate winners that could serve an important purpose for both doctors and patients.

At Stanford Health Care, the early focus with generative AI has been on how large language models can be used to communicate with patients. Can, for instance, AI support tasks like note taking so doctors can be more engaged with their patients? Can AI help organize patient care records — particularly for multiple providers — more efficiently?

“Paradoxically, I think AI will help take the computer out of the room and allow the two humans to make a closer human bond,” said Euan Ashley, MBChB, DPhil, associate dean and professor of medicine, of genetics and of biomedical data science. “And I think medicine will be better for that.”

Patricia Garcia, MD, a clinical associate professor of gastroenterology and hepatology, is leading a pilot program in which a generative AI tool based on ChatGPT automatically drafts responses to patients’ medical advice requests for clinicians to review, edit and send.

Russ Altman, MD, PhD, a professor of bioengineering, of genetics, of medicine and of biomedical data science, and an associate director of HAI and one of four co-leaders of the RAISE-Health initiative, has focused largely on AI’s applications for drug discovery and how those drugs will work on patients — along with the ethical implications involved.

Shah’s biggest concern centers on how the froth this AI buzz has generated is affecting the iteration process. A lot of what is being created, he said, is formed backward. Rather than follow what is known as a biodesign process — where need dictates solutions — the AI buzz has many researchers reaching blindly for the next great thing. “There’s a lot of poorly designed hammers looking for nails,” Shah said.

In what areas is AI showing the most immediate promise?

If Stanford Medicine’s AI imprint to date required a single-word summation, it would be imaging. Of the 500-plus AI algorithms approved by the FDA, 75% are radiology-focused and 85% are imaging-focused. At Stanford Medicine, AI innovation skews heavily toward imaging as well.

In radiology, imaging technologies such as X-ray and MRI are used to diagnose patients, and the field has produced one of the few robust and consistent datasets in medicine. The human genome is another. At their intersection lies a perfect example of the opportunity for discovery.

“AI can be, in some ways, superhuman because of its ability to link disparate data sources,” said Curtis Langlotz, MD, PhD, professor of radiology, of medicine and of biomedical data science as well as the director of the Center for Artificial Intelligence in Medicine and Imaging. “It can take genomic information and imaging information and potentially find linkages that humans aren’t able to make.”

Langlotz, who’s also a co-leader of RAISE-Health and associate director of HAI, has been at the forefront of AI and imaging for many years. His lab feeds medical images and clinical notes into deep neural networks with the goal of detecting disease and eliminating diagnostic errors that could occur with only a doctor’s assessment.

As Langlotz puts it: “Cross-correlating massive datasets is not well suited to humans’ information-processing capability.”

Ashley, who directs Stanford Medicine’s Center for Inherited Cardiovascular Disease, has been pushing algorithms with similar success at the intersection of genetics and cardiology in an effort to improve the detection of cardiac risk. He said there is little doubt that highly trained computers can synthesize medical data in ways that humans cannot. But it’s now a matter of taking early methodology successes from the lab into clinical trials and proving they work in humans.

“We’re at an interesting moment when we’ve demonstrated there is power — that computers can predict things that a human cannot,” he said. “Now, how do we get it into medical practice?”

What are some of Stanford Medicine’s other AI focuses?

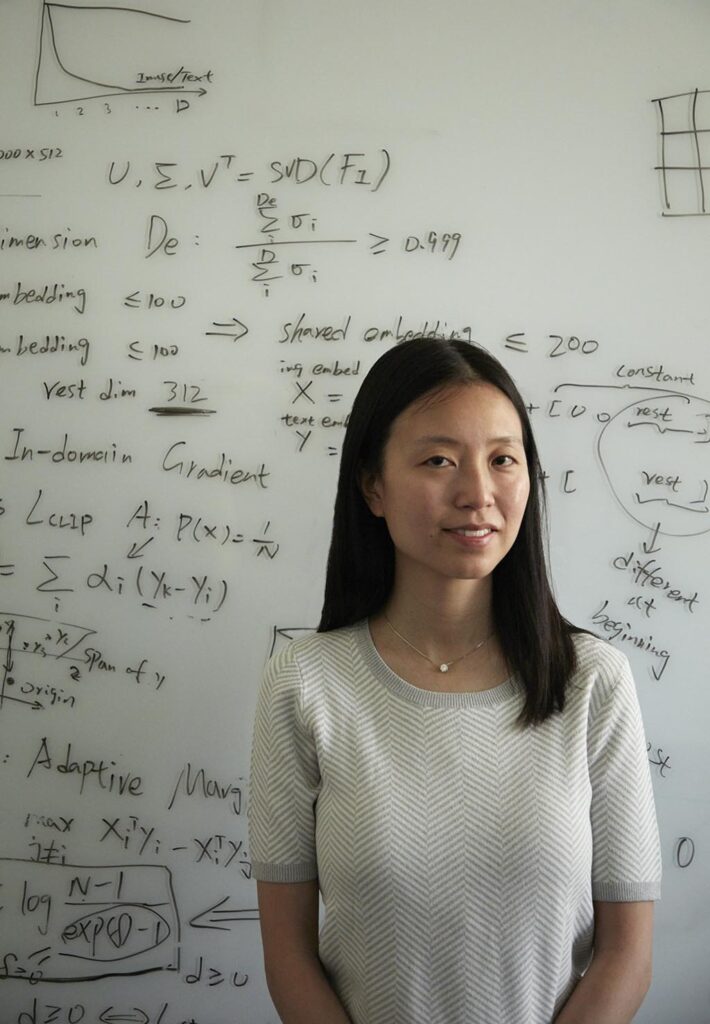

Christina Curtis, PhD, RZ Cao Professor of medicine, of genetics and of biomedical data science, is analyzing the molecular profiles of tumor samples and integrating routine pathology images to advance the standard of care for breast cancer patients. Adding detailed genomic data to the mix, clinicians might one day be able to pinpoint the best treatment for each patient.

“Currently, most cancer patients undergo sequencing only once they’ve developed treatment-resistant metastatic disease,” Curtis said. “There is a missed opportunity to have such information earlier in the disease course, at the time of initial diagnosis, both to compare a given patient to other similar patients and to monitor how the disease changes over time. This could enable more precise and anticipatory care.”

Sylvia Plevritis, PhD, a professor of biomedical data science and of radiology, is also one of the leaders of the RAISE-Health initiative. She developed a systems biology cancer research program that bridges genomics, biocomputation, imaging and population sciences to decipher properties of cancer progression. “Today, AI is completely changing the way we connect the dots between basic science research and clinical care,” said Plevritis, who is in the Stanford Medicine leadership group focusing on what this new path means for medical education.

“We’re having to rethink what scholarship and creativity are when we have tools that can write for us,” she said.

It’s one of the many unanswered questions that make this moment equal parts precarious and exhilarating. Here is a closer look at the work of some of the many Stanford Medicine humans trying to navigate the AI maze in search of answers.

FORCE MULTIPLIERS

AI HELPS PEDIATRICIANS CHECK HEART HEALTH

Speedier, easier heart-pumping assessment for children

An important indicator of the heart’s function is its ability to pump blood to the body — an action typically powered by the left ventricle. But estimating the amount of blood pumped with each heartbeat is time-consuming, and measurements can vary between cardiologists. This assessment has been automated for adults but not pediatric patients.

So, pediatric cardiologist Charitha Reddy, MD, teamed up with engineers and computer scientists to develop a model that automatically estimates the left ventricle’s function in children with accuracy and reliability. Medical decisions for children that rely on a doctor’s assessment of the heart’s ability to pump, such as determining safe chemotherapy doses, can benefit from models tailored to children, she said.

Reddy, a clinical assistant professor of pediatrics, helped collect heart ultrasound videos and annotate images from 1,958 pediatric patients seen at Lucile Packard Children’s Hospital Stanford. The model she helped develop analyzed more than 4,000 video clips of hearts and generated estimates of the left ventricle’s function with high accuracy. Its assessments of heart-pumping ability were speedier and more consistent than doctors’, said Reddy, the lead author of a study of the model published in February 2023 in the Journal of the American Society of Echocardiography.

“It performed essentially as well as a human doing the same measurements, while being less variable,” Reddy said. “The model could improve efficiency with this task or serve as an ‘expert’ when there isn’t one available.”

Clinicians could use the model to assess heart-pumping ability with confidence that the measurement is within about 3% of what they would measure themselves, Reddy said.
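One common way to put a number on that kind of agreement is the average gap between paired measurements. The sketch below is only an illustration: it assumes the pumping estimate is expressed as a percentage, the values are invented, and `mean_absolute_difference` is a hypothetical helper, not something taken from Reddy's study.

```python
def mean_absolute_difference(model_values, clinician_values):
    """Average absolute gap between paired measurements, in the same units."""
    if not model_values or len(model_values) != len(clinician_values):
        raise ValueError("need two non-empty lists of equal length")
    gaps = [abs(m - c) for m, c in zip(model_values, clinician_values)]
    return sum(gaps) / len(gaps)


# Invented example values (percent), for illustration only.
model_estimates = [62.0, 55.0, 48.0, 70.0]
clinician_estimates = [60.0, 58.0, 50.0, 69.0]

gap = mean_absolute_difference(model_estimates, clinician_estimates)
print(f"Average gap: {gap:.1f} percentage points")
```

A small average gap says the model and the clinician usually land close together. It does not say which of the two is right when they disagree.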

The algorithm could offer a template for AI models to assess the function of the right ventricle and the pumping ability of fetal hearts and hearts with structural abnormalities.

The model needs further testing before it’s put to use, but Reddy hopes it will someday help noncardiologists, such as parents of children with heart problems who live far from a hospital, screen for heart-pumping weakness. She also thinks it could improve care in rural areas with fewer cardiologists.

BETTER PHOTOS OF SKIN FOR TELEHEALTH VISITS

In the age of virtual doctor appointments, this app could improve patient photos and expedite treatment

When the COVID-19 pandemic hit in 2020, Roxana Daneshjou, MD, PhD, was a resident in Stanford Medicine’s dermatology clinic, where she helped provide screenings for skin conditions, such as shingles, eczema and suspicious moles. But as the clinic was forced to pivot from in-person visits to telehealth, doctors had to rely on photos taken by patients, which were often hard to interpret. Daneshjou and her colleagues spent hours combing through blurry photos with bad lighting.

“I was reviewing these photos and thought, ‘I think we can develop an algorithm to assess this automatically,’” said Daneshjou, who was also researching how to apply artificial intelligence in health care. “Maybe it could help patients submit clinically useful photos.”

To that end, Daneshjou and a team of researchers collected images of skin conditions depicting a variety of skin tones and used them to train an algorithm to identify low-quality photos — and recommend ways to fix them. Funded by Stanford’s Catalyst program, which supports medical innovations on campus, Daneshjou developed the algorithm into a web app called TrueImage that patients can access from a smartphone or tablet. The idea is that patients will follow the app’s prompts to snap a photo that’s good enough for their doctor. TrueImage rejects low-quality images and tells patients to move to a brighter room, zoom in on their lesion or sharpen the focus. Currently, there’s a long wait for new dermatology appointments nationally, which the app could expedite by helping doctors quickly procure quality images, said Daneshjou, who is now an instructor of biomedical data science and dermatology.
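To give a feel for what an automated photo check involves, here is a minimal sketch built on two hand-coded heuristics: average brightness and the variance of a Laplacian filter, a common stand-in for sharpness. It is not TrueImage's method, which was trained on images of skin conditions. The function names and thresholds are hypothetical, and the sketch does not cover the zoom prompt.

```python
def mean_brightness(img):
    """Average pixel value of a grayscale image given as rows of 0-255 ints."""
    pixels = [p for row in img for p in row]
    return sum(pixels) / len(pixels)


def laplacian_variance(img):
    """Variance of a 4-neighbor Laplacian over interior pixels.

    Low values mean few sharp edges, which often indicates a blurry photo.
    """
    height, width = len(img), len(img[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            neighbors = img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1]
            responses.append(neighbors - 4 * img[y][x])
    mean = sum(responses) / len(responses)
    return sum((r - mean) ** 2 for r in responses) / len(responses)


def photo_feedback(img, min_brightness=60, min_sharpness=100.0):
    """Return prompts for the patient; thresholds are hypothetical, not tuned."""
    tips = []
    if mean_brightness(img) < min_brightness:
        tips.append("Move to a brighter room.")
    if laplacian_variance(img) < min_sharpness:
        tips.append("Sharpen the focus.")
    return tips


# Two tiny synthetic images: one dark and featureless, one bright with crisp edges.
dark_flat = [[10] * 5 for _ in range(5)]
bright_sharp = [[255 if (x + y) % 2 == 0 else 100 for x in range(5)] for y in range(5)]

print(photo_feedback(dark_flat))
print(photo_feedback(bright_sharp))
```

An empty list means the photo passed both checks. A trained model can pick up problems that fixed thresholds like these would miss.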

But algorithms like hers matter only if humans actually use them and change their behavior, Daneshjou said. In a 2021 pilot study run in the clinic, she found TrueImage reduced the number of patients who submitted poor images by 68%. Now, she’s running a larger clinical trial to probe the app’s efficacy when patients use it at home.

If the trial confirms the app’s ability to improve photos that patients submit, the team will launch it in Stanford Medicine clinics. Daneshjou hopes the app can also help doctors outside of dermatology, like primary care providers, collect quality images as they screen for skin conditions.

CHEST SCANS CHANGE PATIENTS’ MINDS

AI analyzed repurposed chest CT images to identify calcium buildup in arteries, encouraging patients to make lifestyle changes

Cardiologist Alexander Sandhu has many patients who could benefit from a CT scan of their heart to detect the buildup of calcium inside their coronary arteries. The plaque is the strongest risk predictor of heart attacks. However, most patients opt out of this telling scan, often because their insurance doesn’t cover it. But a few years ago, Sandhu noticed a happy coincidence.

“Quite frequently, patients have already had a chest CT done for some reason totally unrelated to their heart,” said Sandhu, MD, assistant professor of medicine in the division of cardiovascular medicine. He wondered if artificial intelligence could discern valuable heart information in chest scans previously carried out for any reason — for example, to screen for lung cancer. “I thought, there’s this incredible opportunity to repurpose those scans and provide that information to patients and their clinicians.”

Along with his mentor, David Maron, MD, director of preventive cardiology at the School of Medicine, Sandhu helped design a deep learning algorithm that could assess the amount of calcium in patients’ coronary arteries from a chest CT scan with about as much accuracy as a scan ordered for that specific purpose. In 2022, the team tested the algorithm in 173 patients, most of whom were at high risk for heart disease but were not taking statin medications, which are known to decrease the risk of heart attack and stroke.

They found that when they notified the patients and their primary care physicians about the calcium detected and showed them images of the white deposits in the patients’ arteries, 51% of them started a statin medication within six months. That’s about seven times the statin prescription rate the team observed in a similar group they did not notify, Sandhu wrote in an analysis published in November 2022 in the journal Circulation. (At the end of the study, the researchers notified all patients with coronary calcium.)

“These are usually patients who have no symptoms, and this provides motivation to make lifestyle changes and take statin medications,” Maron said.

In 2023, their research was awarded the James T. Willerson Award in Clinical Science and a Hearst Health Prize. The notification system is currently in use at about 50 U.S. hospitals, Sandhu said.

Sandhu, Maron and their research collaborators are now planning a clinical trial to test whether the system prevents heart attacks and strokes.

COULD AI RIVAL AN EYE SPECIALIST?

An AI model could predict whether patients will need eye surgery to prevent vision loss

When it comes to glaucoma, patients’ eyes are fine — until they’re not. Many with this eye disease remain asymptomatic for years. Yet others with the condition rapidly progress toward irreversible blindness — and may need surgery to prevent it.

“It’s not easy to figure out which patients are which,” said glaucoma specialist Sophia Wang, MD, an assistant professor of ophthalmology at the School of Medicine.

Wang wondered if AI could help. Recently, language-processing technology has opened doors to swiftly analyze doctors’ notes, unearthing a wealth of information — such as family history — to aid in predicting which glaucoma patients will need surgery soon.

Wang and her collaborators trained a two-pronged AI model to “read” doctors’ notes and weigh clinical measurements from 3,469 glaucoma patients to understand what health factors (captured by measurements of eye pressure, medications and diagnosis codes) seemed to indicate that they would need surgery in the coming year. The idea is that doctors could use the model to flag riskier patients and start them on aggressive treatments to prevent vision loss, Wang said.

The model can tell doctors a patient’s chance of needing surgery within the year with more than 80% accuracy, according to a study published in April 2023 in Frontiers in Medicine. Doctors would likely want to act quickly if the model predicted a high chance that a patient would need surgery, whereas a patient with a low chance could potentially be safely monitored with less invasive treatment options. While it’s not perfect, it gives doctors a sense of how worried they should be about the patient, said Wang, the study’s senior author. Surprisingly, she found the model was more accurate than her own assessments.

“If I review 300 notes and try to predict who is going to need surgery, my performance is abysmal, even as a highly trained glaucoma specialist,” Wang said. The different, disparate details in health records don’t necessarily intuitively add up to needing surgery, Wang said, making the prediction especially difficult for doctors. “The model far outperforms humans in this task.”

But before the model can be used in the clinic, Wang and her team want to further refine it by incorporating imaging data. They also plan to test the model on large cohorts of patients who are racially, ethnically and socioeconomically diverse and live in various regions of the United States.

PATIENT CARE

IMPROVING EQUITY IN HEART ATTACK SCREENING

Together, humans and AI could screen for heart attacks more precisely and equitably

Emergency department protocol is clear. When patients arrive with signs of a heart attack, the ED team has less than 10 minutes to assess them with an electrocardiogram, or EKG, to determine whether a blockage is reducing blood flow to their heart. As the seconds increase, so does a patient’s risk of permanent heart damage.

That’s a lot of pressure on the staff registering incoming patients to make the right call, said Maame Yaa A. B. Yiadom, MD, associate professor of emergency medicine. She was curious if AI could reduce delays in care. “We intended to build a model that could outperform humans in this screening task,” Yiadom said.

Funded by HAI, Yiadom’s lab — including a team of statisticians, data scientists, medical informaticists and emergency medicine physicians — created a predictive model that could determine within 10 minutes whether an ED patient should be tested for a heart attack upon arrival with an EKG.

To assess the model’s performance, they used it to analyze electronic health records from 279,132 past visits to Stanford Hospital’s ED. Using information such as patient age, chief complaint and other routinely collected data, the model predicted which patients would go on to be diagnosed with an adverse heart event, meaning they should be screened with an EKG. The model wasn’t perfect: It missed 18% of cases. But it outperformed ED staff screening, which typically missed about 27%.

Still, it wasn’t the solution Yiadom’s team was hoping for.

“Our biggest surprise was that the model was biased when it came to race,” she said. In their study, the model was worse at detecting heart attack risk in Native American, Pacific Islander and Black patients than in white patients. The team observed that members of these historically disadvantaged populations tend to have heart attacks at younger ages, and the model generally categorized young patients as low risk, Yiadom said.

“There’s something that the humans are doing that is introducing equity into the screening. We don’t want to throw that away,” Yiadom said.

So, she and her team tested a “fail safe” screening approach, in which patients would get their hearts tested if either the staff or the AI model indicated a need. The human-AI combo method performed better than either alone, missing only 8% of cases while showing little variation between patients of different sexes, races, ethnicities and ages, Yiadom wrote in a study published in June 2023 in the journal Diagnostics.
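The logic of the “fail safe” rule can be sketched in a few lines: A patient is flagged if either the staff or the model says so. The example below is a hedged illustration, not the study's code. The `Visit` record, the helper names and the six toy visits are all invented for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Visit:
    staff_flag: bool  # staff judged that an early EKG was needed
    model_flag: bool  # model predicted that an early EKG was needed
    had_event: bool   # patient was later diagnosed with a heart event


def miss_rate(visits, rule):
    """Share of true cases that a screening rule failed to flag."""
    cases = [v for v in visits if v.had_event]
    if not cases:
        raise ValueError("no true cases to evaluate")
    missed = sum(1 for v in cases if not rule(v))
    return missed / len(cases)


def staff_only(visit):
    return visit.staff_flag


def model_only(visit):
    return visit.model_flag


def fail_safe(visit):
    # Screen the patient if EITHER the staff or the model raises a flag.
    return visit.staff_flag or visit.model_flag


# Synthetic toy visits, invented for illustration only.
visits = [
    Visit(staff_flag=True, model_flag=True, had_event=True),
    Visit(staff_flag=True, model_flag=False, had_event=True),
    Visit(staff_flag=False, model_flag=True, had_event=True),
    Visit(staff_flag=False, model_flag=False, had_event=True),
    Visit(staff_flag=False, model_flag=False, had_event=False),
    Visit(staff_flag=True, model_flag=False, had_event=False),
]

for name, rule in [("staff", staff_only), ("model", model_only), ("either", fail_safe)]:
    print(f"{name}: missed {miss_rate(visits, rule):.0%} of cases")
```

By construction, the either-or rule can never miss a case that the staff or the model caught. The trade-off is that it flags more patients overall, which is one reason the combined method still has to be tested against real ED workflows.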

She intends to test the combined method in clinics to ensure that it doesn’t impede ED processes and that it functions equitably in a real setting. Yiadom is also designing a new AI model that she hopes will be unbiased by accounting for the range of ages at which heart attacks occur in different populations.

AI COULD IMPROVE SURGERY PERFORMANCE

AI could act as an expert colleague to assess surgery skills

What if, like athletes, surgeons could improve their technique based on insights from video footage? Serena Yeung thinks it could be possible with a little automated help.

“There’s potential to provide continuous feedback to trainee surgeons,” said Yeung, PhD, an assistant professor of biomedical data science. “With surgery, what’s influencing patient outcomes is directly observable to the eye.”

Many surgeries are recorded, especially those in which surgeons operate using a robot. In these procedures, surgeons control robotic arms to operate with more precision and dexterity than human hands can achieve. Robotic surgery video feeds can be reviewed by senior surgeons to evaluate trainees’ technique, but surgical skill assessments like these are often time-consuming and vary based on the evaluator. Yeung, an expert in artificial intelligence, learned of this problem from surgeon Brooke Gurland, MD, clinical professor of surgery, and developed an AI-powered algorithm to help.

The model analyzed tool movement in 92 robotic surgeries. It then evaluated clinicians’ surgery skill, based on surgical technique and efficiency. Six expert surgeons watched those same videos and provided their own assessments. The model’s assessments of skill aligned with expert ratings, with high accuracy, Yeung and her colleagues reported in the journal Surgical Endoscopy in April.

The idea is that trainee surgeons could use the model to get frequent, objective feedback on their technique, Yeung said.

“I think the model is very close to being useful for training and skills assessment,” she said.

She’s working on bringing similar algorithms into medical schools to help evaluate the hand movements that residents make while learning to perform new surgeries.

Next, Yeung hopes AI can “learn” to give live feedback to surgeons and prevent mistakes during operations, acting like an expert colleague. For example, she’s conducting research with longtime collaborator Dan Azagury, MD, associate professor of surgery, to see if AI can analyze the video feeds of many operations and identify which aspects of surgeon technique may be more strongly linked with excessive blood loss. The work is funded in part by the Clinical Excellence Research Center at Stanford Medicine.

Such models could be particularly impactful in communities with few surgeons, where experts — and their time to train residents — are limited, Yeung said.

“I think there’s so much more that we can do here. This is just the start,” Yeung said.

AI CAPTURES WHY SOME PEOPLE DON’T USE HEART MEDICATION

By flagging reasons patients aren’t on heart medications, AI could inform equitable solutions

Cardiologist Fatima Rodriguez, MD, remembers when a colleague in Stanford Health Care’s preventive cardiology clinic said: “All of our patients with heart disease that should be on statins are on them, correct?”

Rodriguez paused. Though statin medications are generally safe and effective at reducing the chance of heart attack and stroke, many patients who are at risk — such as those with heart disease and diabetes — aren’t taking them. “You know what? I really don’t know the answer to that. Let me look into it,” Rodriguez, an associate professor in cardiovascular medicine, had said.

So she and others helped train multiple AI models to determine whether a patient had a statin prescription, and if they didn’t, why not. The scientists refined a natural language processing model that had been trained to recognize and interpret clinical language in doctors’ notes.

They developed different versions of the model by further training it on thousands of electronic health records from Northern California patient populations with an increased heart attack risk — such as those with cardiovascular disease or diabetes — and showed that the updated models parsed out instances of statin nonuse and the reasons patients weren’t taking them with high accuracy.

Using one of the updated models, the team found that about half of 33,461 diabetes patients in the Northern California cohort who could have benefited from statins weren’t taking them — among them, Stanford Health Care patients. Patients in the cohort who were younger, female and Black had a disproportionately low level of statin use, Rodriguez reported in a study published in March 2023 in the Journal of the American Heart Association. The model flagged reasons for nonuse, ranging from personal concerns about side effects to roadblocks within clinics.

“Language barriers affect a lot of the Hispanic patients I treat,” Rodriguez said. “Are we effectively communicating the importance of these medications?”

Rodriguez hopes that algorithms like these could inform targeted programs to increase statin use equitably. Perhaps system-level solutions, such as allocating extra appointment time for health care providers to educate patients, would increase statin use and take the onus off cardiologists to follow up with their patients outside of clinic hours, she said.

The trick is to think broadly to help patients seek out and use the prescriptions they need. “There isn’t one solution,” Rodriguez said. “There will be different solutions that work for different people.”

RESEARCH

AI GUIDES PROTEIN EVOLUTION

An algorithm can speed up evolution of proteins to target viruses, diseases

Evolution is inefficient. Random genetic mutations that lead to improved protein fitness are rare but powerful. Scientists who hope to harness the power of evolution to create beneficial proteins would like to speed up the process — to promote the evolution of, for example, antibodies that bind to and flag nasty viruses. But for each protein, the variations are nearly endless. Identifying the best combination of amino acids, the building blocks of proteins, is like combing through all the atoms in the universe, said Brian Hie, PhD, a postdoctoral scholar in biochemistry.

Hie was curious if AI could narrow the search for protein variants that can do their jobs better. This could save scientists time and money when they’re testing potential proteins for new therapies, Hie said. “For example, about half of today’s blockbuster drugs are antibody-based,” he added.

Hie brought the idea to his faculty adviser, Peter Kim, PhD, the Virginia and D.K. Ludwig Professor in Biochemistry. With collaborators, they developed an algorithm that ran on language models trained on many of the amino acid sequences that make up proteins. The six models they used had been trained on datasets that hold, altogether, the amino acid sequences for more than 100 million proteins from humans, animals and bacteria.

“It’s basically the equivalent of ChatGPT, only instead of feeding it English words, the model was fed proteins’ amino acid sequences,” Kim said. “From these protein sequences, the computer identifies patterns that we can’t see.”

In a proof-of-concept test, the models guided evolution of human antibodies that bind to coronavirus, ebolavirus and influenza A. Within seconds, each model analyzed thousands of protein variants and recommended a handful of amino acid changes. The models agreed on a few that ultimately improved the antibodies’ ability to bind to their target virus.

The models essentially learned the rules of evolution, rejecting amino acid changes that caused misfolded proteins and prioritizing changes that made them more stable or improved their fitness for a given purpose.
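The idea of several models each proposing amino acid changes, with only the shared proposals kept, can be illustrated with a minimal Python sketch. Everything in it is an assumption made for illustration: `toy_model` is a hypothetical stand-in for a real protein language model, the scores are invented, and the voting rule is a simple guess at what "the models agreed" might look like, not the researchers' actual procedure.

```python
from collections import Counter

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids


def toy_model(preferred):
    """Build a hypothetical scorer standing in for a protein language model.

    It returns 1.0 for (position, residue) pairs in `preferred`, 0.5 for the
    residue already present in the sequence, and 0.0 for everything else.
    """
    def score(sequence, position, residue):
        if (position, residue) in preferred:
            return 1.0
        return 0.5 if residue == sequence[position] else 0.0
    return score


def recommend(score, wild_type):
    """Substitutions that one model scores above the original residue."""
    picks = set()
    for position, original in enumerate(wild_type):
        baseline = score(wild_type, position, original)
        for residue in AMINO_ACIDS:
            if residue != original and score(wild_type, position, residue) > baseline:
                picks.add((position, original, residue))
    return picks


def consensus(models, wild_type, min_votes=2):
    """Keep substitutions recommended by at least `min_votes` models."""
    votes = Counter()
    for score in models:
        votes.update(recommend(score, wild_type))
    return sorted(change for change, count in votes.items() if count >= min_votes)


# Three invented models that all favor tryptophan (W) at position 1.
models = [
    toy_model({(1, "W"), (3, "K")}),
    toy_model({(1, "W")}),
    toy_model({(1, "W"), (2, "Y")}),
]
agreed = consensus(models, "ACDE")
print(agreed)  # [(1, 'C', 'W')]
```

Each tuple reads as (position, original residue, suggested residue). Requiring agreement trims a long list of single-model suggestions down to the handful worth testing in the lab.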

Hie hopes the models can help evolve antibody drugs for cancer and autoimmune and neurodegenerative diseases. Other Stanford University scientists are testing their utility on different protein types, such as enzymes.

“There’s a vast universe of proteins,” Hie said. “It’s really exciting to think of the possible applications — from DNA editors to climate-related atmospheric CO2 removers.”

INTRODUCING THE ‘MORPHOLOME’

AI helps reveal unique cell shapes, informing therapies

When scientists study cells, they look at them in a few different ways. Often, they gather clues from different groups of molecules, such as DNA or proteins — or the genome and proteome, respectively — to look for signs of health and disease. Recently, Stanford Medicine scientists added a new category to the list — the “morpholome,” which refers to the full gamut of shapes taken on by an organism’s cells. They’ve developed a technology to capture how various cells look in normal and disease states and also sort them according to their morphologies.

Until now, cells’ shapes have largely been left out of biomedical research, said Euan Ashley, MBChB, DPhil, the director of the Stanford Center for Inherited Cardiovascular Disease.

“It’s like studying a population of humans by looking at the genome, their RNA, their proteins, but never stopping to look at the body,” he said. “If we did, we’d find that some are 7 feet tall, and some are 3 feet tall. Just like us, cells have so much diversity in morphology, and they’re constantly in motion.”

About a decade ago, Ashley and Maddison Masaeli, PhD, a postdoctoral scholar in Ashley’s lab, began developing a technology to make cell morphology more amenable to study. Artificial intelligence expert Mahyar Salek, PhD, then a computer scientist at Google, joined the team, and they started programming an AI model to organize images of cells into categories based on measurements of their shape and developmental stage, such as circularity and size. The resulting model can analyze images of live cells from a tissue sample and group cells with similar shapes together using a multipurpose neural network. The program delivers this information to a machine that filters the tissue sample so scientists can identify cell populations of interest and isolate them based on their morphologies.
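To make the notion of sorting cells by circularity and size concrete, here is a small Python sketch. It is a deliberate simplification: it uses hand-computed area and perimeter values and fixed cutoffs rather than a neural network reading live images, and the cutoff values and cell measurements are invented for illustration. The circularity formula itself, 4π × area / perimeter², is a standard shape measure that equals 1 for a perfect circle.

```python
import math
from collections import defaultdict


def circularity(area, perimeter):
    """4*pi*area / perimeter**2: 1.0 for a perfect circle, lower otherwise."""
    return 4 * math.pi * area / perimeter ** 2


def group_by_shape(cells, round_cutoff=0.8, large_cutoff=100.0):
    """Group cell ids by (roundness, size) labels.

    `cells` maps a cell id to (area, perimeter). Both cutoffs are
    illustrative assumptions, not values from the Stanford platform.
    """
    groups = defaultdict(list)
    for cell_id, (area, perimeter) in cells.items():
        roundness = "round" if circularity(area, perimeter) >= round_cutoff else "irregular"
        size = "large" if area >= large_cutoff else "small"
        groups[(roundness, size)].append(cell_id)
    return dict(groups)


# Invented measurements in arbitrary units.
cells = {
    "cell_1": (math.pi * 3 ** 2, 2 * math.pi * 3),  # circle, radius 3
    "cell_2": (math.pi * 6 ** 2, 2 * math.pi * 6),  # circle, radius 6
    "cell_3": (16.0, 16.0),                         # 4 x 4 square
    "cell_4": (120.0, 68.0),                        # 4 x 30 rectangle
}
groups = group_by_shape(cells)
for label, members in groups.items():
    print(label, members)
```

In this toy version each cell lands in exactly one of four bins. A learned model would replace the two hand-picked measurements with features extracted from the images themselves.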

In 2017, Ashley, Masaeli and Salek founded a company, Deepcell, that produces and sells the platform to researchers. This summer, the technology came back home to Ashley’s lab through a beta-testing program, and Ashley used it to study cells from patients with inherited heart disease.

By analyzing the heart cell morpholome, he hopes to differentiate the shape of diseased cells from others that have been “cured” by gene-editing technology. The discrepancy in features between the diseased and restored cells could inform heart disease therapies, Ashley said.

AUTOMATING INCLUSIVE TRIALS

AI helps broaden clinical trial pools so they are larger, more inclusive

Clinical trial eligibility criteria can have unintended consequences. These criteria are meant to exclude patients who might have adverse reactions to experimental therapies and allow the study’s outcome to be interpreted with confidence, but the rules are sometimes so strict that drug trials cannot recruit enough participants.

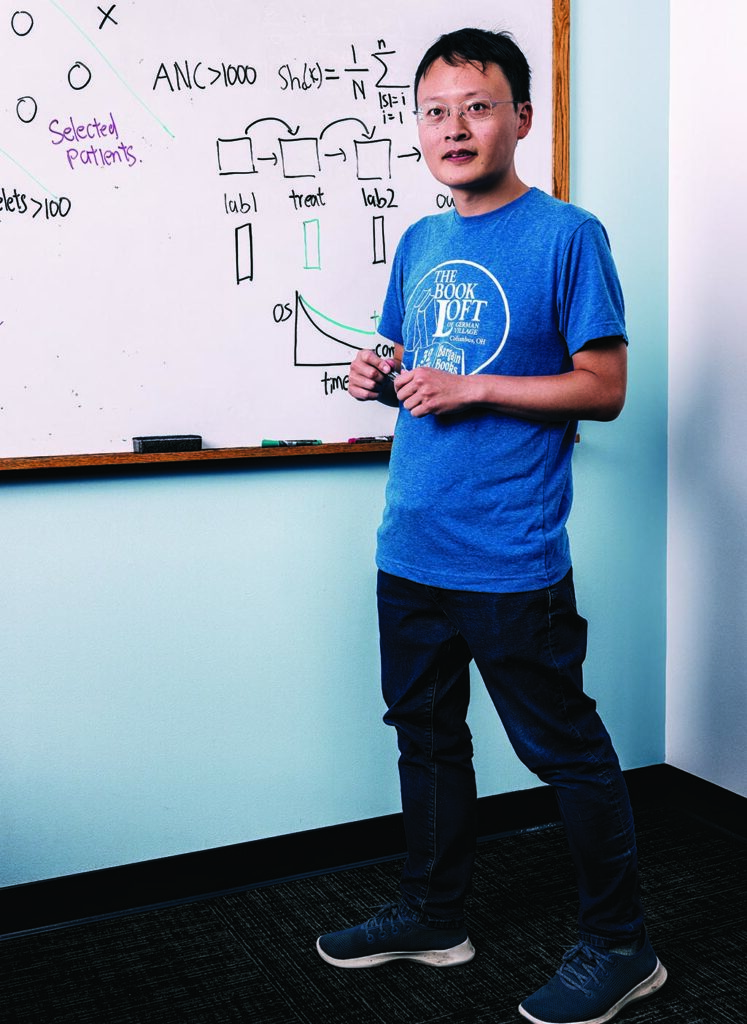

“Many of these eligibility rules are looking for so-called Olympic athletes, or the healthiest among the patients who have the disease,” said James Zou, PhD, an assistant professor of biomedical data science. “This hurts the robustness of the trial because they may not reflect how drugs work on average patients like us.” Additionally, the narrow range of acceptable lab results and the exclusion of people with additional medical conditions tend to rule out female, elderly and nonwhite participants more than others, he said. This could be, in part, because passable lab results are often set based on values common in healthy white males, Zou added.

Expanding the clinical trial pool could expedite drug trials and lead to results that more accurately reflect the efficacy and safety of new treatments. Along with Ruishan Liu, a graduate student; Ying Lu, PhD, a professor of biomedical data science; and collaborators from the biotechnology company Genentech, Zou designed an artificial intelligence algorithm that evaluates patient health records against eligibility criteria for trials and makes recommendations to help scientists enroll more participants without compromising their safety.

To test the algorithm, called Trial Pathfinder, the team first had it look at electronic health records from hundreds of thousands of patients with cancer and select those eligible to enter lung cancer clinical trials given current criteria. Then, it relaxed the values for each health metric, so the simulated trial included people who were more representative of the population with the disease, while maintaining a low “hazard ratio,” meaning they would be likely to live longer while taking the treatment rather than a placebo.

“On average, using Trial Pathfinder’s recommendations, we can more than double the number of eligible patients,” Zou said. “And when we enroll these additional patients, they sometimes are likely to benefit more from the treatment than the narrow slice of patients who were previously recruited.” Zou and his colleagues published their study in April 2021 in Nature.

Trial Pathfinder was particularly successful at enrolling more lung cancer participants when it expanded the range of acceptable values on lab tests, such as platelet and hemoglobin levels.
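The trade-off described above, loosening lab thresholds to admit more patients while keeping the hazard ratio low, can be sketched in a few lines of Python. This is a toy under stated assumptions, not the Trial Pathfinder method: the eight patient records are invented, the hazard ratio is a crude estimate (deaths per month of follow-up in treated patients divided by the same rate in untreated patients), and the function names and the 0.5 ceiling are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    hemoglobin: float  # g/dL
    platelets: float   # 10^9 per liter
    treated: bool      # received the study drug
    months: float      # follow-up time
    died: bool


def eligible(patients, min_hemoglobin, min_platelets):
    """Patients whose lab values meet both minimum thresholds."""
    return [p for p in patients
            if p.hemoglobin >= min_hemoglobin and p.platelets >= min_platelets]


def crude_hazard_ratio(cohort):
    """Death rate per month of follow-up, treated divided by untreated.

    Assumes both groups are non-empty and the untreated group has deaths.
    """
    def rate(group):
        return sum(p.died for p in group) / sum(p.months for p in group)
    treated = [p for p in cohort if p.treated]
    control = [p for p in cohort if not p.treated]
    return rate(treated) / rate(control)


def widest_safe_criteria(patients, candidates, max_hazard_ratio):
    """Thresholds admitting the most patients with the ratio in bounds."""
    best, best_size = None, 0
    for min_hgb, min_plt in candidates:
        cohort = eligible(patients, min_hgb, min_plt)
        if len(cohort) > best_size and crude_hazard_ratio(cohort) <= max_hazard_ratio:
            best, best_size = (min_hgb, min_plt), len(cohort)
    return best, best_size


# Invented records: (hemoglobin, platelets, treated, months, died).
patients = [
    Patient(12.0, 150, True, 20, False), Patient(11.5, 140, True, 10, True),
    Patient(12.5, 160, False, 10, True), Patient(11.0, 120, False, 5, True),
    Patient(9.5, 110, True, 15, False), Patient(9.0, 105, True, 15, True),
    Patient(9.8, 100, False, 6, True), Patient(9.2, 115, False, 4, True),
]
candidates = [(11.0, 120.0), (10.0, 110.0), (9.0, 100.0)]  # strict to relaxed
print(widest_safe_criteria(patients, candidates, max_hazard_ratio=0.5))
# ((9.0, 100.0), 8)
```

With these made-up numbers the strictest thresholds admit four patients and the most relaxed admit all eight, while the crude ratio stays below the ceiling. A real analysis would rely on proper survival models and far larger cohorts.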

The model was similarly successful in recruiting patients for other clinical trials, including for therapies for colorectal and metastatic breast cancer. “It turns out that many learnings are generalizable across cancer types,” Zou said. The technology earned him a Top Ten Clinical Research Achievement Award from the Clinical Research Forum in 2022.

Now, Genentech is using the algorithm to help design clinical trials, and Zou is working on similar algorithms that can be applied to conditions beyond cancer, such as autoimmune and infectious diseases.

Mark Conley is an associate editor on Stanford Medicine’s content strategy team. Contact him at mjconley@stanford.edu. Anna Marie Yanny is a freelance writer. Contact her at medmag@stanford.edu.